Layer 4-based redundancy

This page describes how Dante can be configured for layer 4-based redirection. For a general overview of fault tolerance and load balancing in Dante, see the main page on redundancy.

Layer 4 redirection

A port bouncer or similar application can perform layer 4 redirection of traffic, to achieve either fault tolerance, load balancing or both, by accepting connections and UDP data from the client on one socket and communicating with the Dante servers on a separate set of sockets.
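As a rough illustration of the two-socket structure described above, the sketch below builds a socat command line that accepts TCP connections on one address and opens a separate connection to a single Dante server. The use of socat, the helper name bouncer_cmd and the example addresses are assumptions for illustration, not part of the Dante documentation. A single forwarder like this provides neither fault tolerance nor load balancing, and does not handle UDP.

```shell
#!/bin/sh
# Hypothetical helper: print (but do not run) a socat command that listens
# on listen_addr:port and forwards each accepted TCP connection to
# dante_addr:port over a separate socket.
bouncer_cmd() {
    listen_addr="$1"    # address the clients connect to (IP-SHARED)
    dante_addr="$2"     # address of one Dante server (IP-DANTE)
    port="${3:-1080}"   # SOCKS port, 1080 unless specified
    printf 'socat TCP-LISTEN:%s,bind=%s,fork,reuseaddr TCP:%s:%s\n' \
        "$port" "$listen_addr" "$dante_addr" "$port"
}

# Example addresses are assumptions.
bouncer_cmd 10.0.0.1 10.0.1.2
```

The printed command can be inspected before being run by hand; the fork option makes socat handle each client connection in its own child process.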

In contrast with layer 3 redirection, where the kernel rewrites packets so that the client appears to be communicating with the relay, with layer 4 redirection the client actually does have the relay as a communication endpoint, and the redirection can be implemented in a user-space application without any kernel support.

Layer 4 redirection is similar to layer 3 redirection, but has some notable differences. One benefit is simplicity, with no need to rely on kernel functionality or lower networking layer tricks, while a drawback is that if implemented via a user-space application, the system will have additional overhead that will reduce any potential scalability benefits from load balancing. This is because both the redirection software and the Dante servers, which are also user-space applications, will be subject to the same potential bottlenecks. Also, as noted in the redirection and load balancing considerations section above, barring any load balancing at the relay, traffic from the Dante nodes will need to pass through the relay, potentially making the relay a bottleneck.

The layer 4 redirection unit also becomes a potential source of failure, and would likely also benefit from some form of redundancy to prevent this.

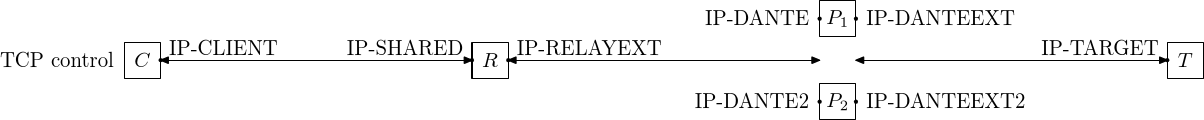

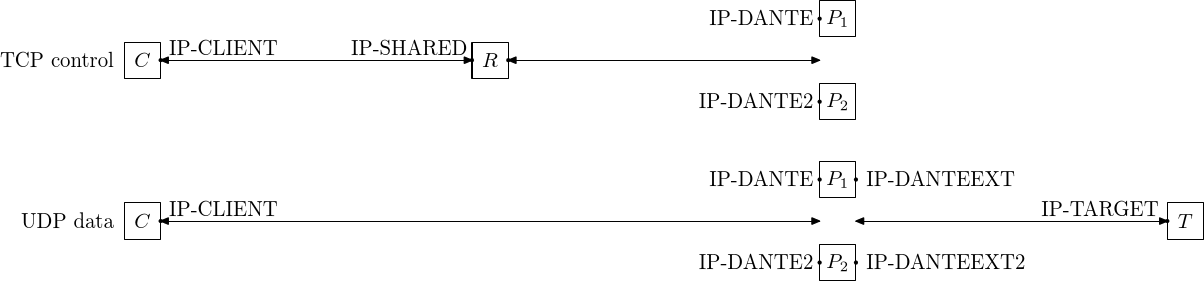

The connection endpoints in a layer 4 shared IP redirection fault-tolerant/load-balanced configuration are shown in the figure above for the TCP-based commands CONNECT and BIND. As with the layer 3 relay, there is a relay node (R) that has the IP-SHARED address, which the client has as its peer IP-address. There are three separate connections here: from the client to the relay, from the relay to a Dante server, and from Dante to the target server. The relay forwards traffic received on the IP-SHARED socket to the Dante server and traffic received on the IP-RELAYEXT socket to the SOCKS client.

Depending on which node is communicating with a client, the following two connection pair alternatives exist:

- P1 used: IP-CLIENT to IP-SHARED, IP-RELAYEXT to IP-DANTE and IP-DANTEEXT to IP-TARGET

- P2 used: IP-CLIENT to IP-SHARED, IP-RELAYEXT to IP-DANTE2 and IP-DANTEEXT2 to IP-TARGET

Seen from the client, only the IP-DANTEEXT/IP-DANTEEXT2 addresses are different in the two configurations. For the TCP CONNECT/BIND commands, the IP-DANTE/IP-DANTE2 addresses are not visible; the address of the Dante server appears to be the shared IP-address IP-SHARED of the relay.

IP-DANTEEXT and IP-DANTEEXT2 will likely need to be different IP-addresses, since with layer 4 redirection there is no shared address mechanism on the Dante server nodes. The two machines are independent; their only relation is that both run Dante servers and that the relay forwards to both.

For UDP traffic, the socket endpoints are shown in the figure above. In addition to the TCP control connection, there are also two separate UDP data socket pairs. As with layer 3 redirection, the client communicates directly with the IP-DANTE/IP-DANTE2 addresses at the proxy machines when sending UDP data. This is because the Dante server currently always receives UDP data on the same address that the TCP control connection was received on. Since the Dante servers are bound to IP-DANTE/IP-DANTE2 and not IP-SHARED, the target address for UDP traffic returned to the clients will be the address that the server is bound to, resulting in UDP traffic going directly between the clients and the Dante servers and not via the relay.

With regard to load balancing, this is not a problem. Load balancing is achieved via server selection when the TCP control connection is established, and since the UDP data goes to the same machine as the control connection, this traffic is effectively load-balanced as well. The relay will not become a bottleneck for UDP traffic, but might become one because all TCP traffic passes through it, unless the relay is also load-balanced.

As for routing/firewall rules, UDP traffic being exchanged directly between the SOCKS clients and Dante means that the IP-DANTE/IP-DANTE2 addresses must be reachable by the clients. Direct communication via TCP is not necessary and direct TCP traffic between the clients and Dante servers can be dropped.

Example Dante configuration

A minimal Dante configuration can look like this (first Dante server):

    errorlog: syslog
    logoutput: /var/log/sockd.log

    internal: IP-DANTE #IP-DANTE2 for second node
    external: IP-DANTEEXT #IP-DANTEEXT2 for second node

    client pass {
        from: 0/0 to: 0/0
        log: error connect disconnect
    }

    socks pass {
        from: 0/0 to: 0/0
        log: error connect disconnect
    }
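Since the two nodes differ only in their internal and external addresses, the per-node file could be generated from a single template. The following is a minimal sketch of that idea; the helper name gen_sockd_conf and the example addresses are hypothetical, and the generated content simply mirrors the minimal configuration above.

```shell
#!/bin/sh
# Hypothetical helper: print a minimal Dante configuration for one node.
# $1 is the internal address (IP-DANTE or IP-DANTE2),
# $2 is the external address (IP-DANTEEXT or IP-DANTEEXT2).
gen_sockd_conf() {
    internal_addr="$1"
    external_addr="$2"
    cat <<EOF
errorlog: syslog
logoutput: /var/log/sockd.log

internal: $internal_addr
external: $external_addr

client pass {
    from: 0/0 to: 0/0
    log: error connect disconnect
}

socks pass {
    from: 0/0 to: 0/0
    log: error connect disconnect
}
EOF
}

# Example addresses are assumptions; substitute the real node addresses.
gen_sockd_conf 10.0.1.2 192.0.2.2
```

The output can be redirected to the configuration file on each node, once with the first node's addresses and once with the second node's.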

Example relayd(8) configuration

The following relayd(8) configuration creates a pool of two servers that are forwarded to, with server selection based on the source IP-address. UDP traffic goes directly to the Dante servers, so the server selection algorithm does not need to take UDP traffic into consideration. The external address of the Dante servers will still be different for each host, however, so using a source IP-address based hashing algorithm will reduce the possibility of client application interoperability problems (see the section above on external address variation for more details).

/etc/relayd.conf:

    table <relayd_relayservers> { 10.0.1.2, 10.0.1.3 }

    relaysockd_port="1080"
    relaysockd_addr="10.0.0.1"

    relay "relaysockd" {
        listen on $relaysockd_addr port $relaysockd_port
        forward to <relayd_relayservers> port 1080 mode loadbalance check tcp
    }
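To illustrate why selection based on the source IP-address keeps a given client on the same Dante server, and therefore behind the same external address, here is a deliberately simplified sketch. It is not the hashing that relayd(8) performs internally; the function names ip_to_int and pick_server and the client addresses are assumptions made for the example.

```shell
#!/bin/sh
# Illustrative only: NOT the algorithm used by relayd(8).

# Convert a dotted-quad IPv4 address to an integer. The function body runs
# in a subshell, so the IFS change does not leak to the caller.
ip_to_int() (
    IFS=.
    set -- $1
    echo $(( (($1 * 256 + $2) * 256 + $3) * 256 + $4 ))
)

# pick_server CLIENT_IP SERVER...
# Map the client address onto one of the listed servers. The same client
# address always yields the same server while the server list is unchanged.
pick_server() {
    client_int=$(ip_to_int "$1")
    shift
    idx=$(( client_int % $# ))
    shift "$idx"
    echo "$1"
}

# Pool taken from the relayd.conf example; client addresses are assumptions.
pick_server 192.0.2.10 10.0.1.2 10.0.1.3
pick_server 192.0.2.11 10.0.1.2 10.0.1.3
```

With this toy mapping the two example clients land on different servers, and repeating a call with the same client address returns the same server each time.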

The relayd(8) configuration above uses an internal relayd(8) TCP connectivity check to monitor the Dante servers. This will detect the machine Dante is running on going down, or the Dante server not running, but not problems that prevent Dante from working correctly. To use a more extensive check that also verifies that connection forwarding works, the following script can be used:

/usr/local/bin/relaydsockdcheck.sh:

    #!/bin/sh -
    #
    # relaydsockdcheck.sh - relayd "check script" script for SOCKS
    #
    # This script is meant to be called by relayd to check if a SOCKS
    # server is running sufficiently well to be considered up.
    # From the relayd.conf(5) manual: "relayd(8) expects a positive return
    # value on success and zero on failure"
    # Additionally: "the script will be executed with the privileges of the
    # '_relayd' user and terminated after timeout milliseconds".

    APP="relaydsockdcheck.sh"

    SUCCESS=1 # "positive return value on success"
    FAILURE=0 # "zero on failure"

    SOCKSSERVER="$1"
    if test x"$SOCKSSERVER" = x; then
        exit $FAILURE
    fi

    SOCKSPORT=1080 # default SOCKS port
    BINDIP="10.0.1.1" # target address for SOCKS test request

    maxconn.pl -q -s "$SOCKSSERVER:$SOCKSPORT" -b "$BINDIP" -c 1 -E 1
    # "return" is only valid inside a function or sourced file, so use "exit".
    if test $? -eq 0; then
        exit $SUCCESS
    else
        exit $FAILURE
    fi

The relaydsockdcheck.sh script contains the following values that might need to be adjusted during installation:

- BINDIP - The address on the relay (R) that the SOCKS forwarding test should attempt to connect to. It should be an address the Dante servers can open a connection to, such as IP-RELAYEXT.

- SOCKSPORT - Should be changed if Dante is running on a port different from the default 1080.

The script also needs maxconn.pl, which performs the actual communication with the SOCKS server and needs to be installed on the same machine as relayd(8).

The relayd(8) configuration then uses the script as its check:

    table <relayd_relayservers> { 10.0.1.2, 10.0.1.3 }

    relaysockd_port="1080"
    relaysockd_addr="10.0.0.1"

    relay "relaysockd" {
        listen on $relaysockd_addr port $relaysockd_port
        forward to <relayd_relayservers> port 1080 mode loadbalance check script "/usr/local/bin/relaydsockdcheck.sh"
    }
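When trying the check script by hand, note that its exit status follows the convention quoted in the script comments (positive on success, zero on failure), which is the reverse of the usual shell convention. The helper below is a small sketch for interpreting that status; the function names describe_check_status and run_check are hypothetical.

```shell
#!/bin/sh
# Hypothetical helpers for running a relayd-style check script manually.

# Translate a check script exit status into words: positive means the
# server is considered up, zero means it is considered down.
describe_check_status() {
    if [ "$1" -gt 0 ]; then
        echo up
    else
        echo down
    fi
}

# Run the given command and report how its exit status would be read.
run_check() {
    "$@"
    describe_check_status $?
}

# Possible manual invocation (path and address taken from the examples):
#   run_check /usr/local/bin/relaydsockdcheck.sh 10.0.1.2
```

Because of the reversed convention, a command that exits with status 0, such as true, is reported as down, while one that exits with status 1 is reported as up.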